Safety in numbers? The challenges of trust, privacy, and moderation for high-growth online communities

Unless you’ve been living under a rock, you’ve heard of Clubhouse, the new kid on the social media block. The invite-only audio app has garnered a lot of attention lately, attracting big names like Elon Musk, Mark Zuckerberg, Robinhood CEO Vlad Tenev, and Bill Gates.

For the uninitiated, Clubhouse is a social media platform where users can create or join “rooms” in the tradition of the MSN chat rooms of old – except that the interactions are audio-based.

The app, which is still only available on iOS, has been downloaded more than 12.2 million times, and the hype has reached levels where PR firms are reportedly hiring “Clubhouse managers” to get their clients in on the action.

So what’s the catch?

Clubhouse and user data privacy

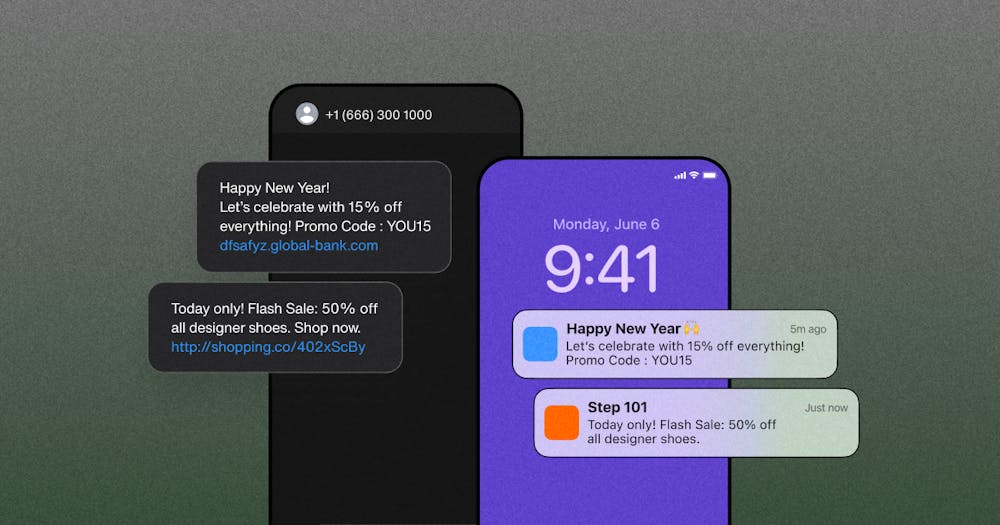

Like many other social media platforms before it, Clubhouse is already running into issues with privacy. Bloomberg recently reported that a user managed to stream audio from several supposedly private conversations to a third-party website.

There has also been a lot of talk about Clubhouse’s use of an SDK created by Shanghai-based software company Agora, which potentially exposes user data to access by the Chinese government.

Another Clubhouse privacy issue that has come to light in recent weeks is the lack of transparency around privacy controls in connection with user “follow” and “invite” recommendations. The app prompts new users to grant it access to their contacts to make it easier to find people to follow and build their social network.

While this may seem like pretty standard stuff for a social media platform, the problem is that users who didn’t grant permission are finding that, within moments of creating their “anonymous” account, people they know start following them because those people had granted the app access to their own contacts.


Clubhouse, safety, and moderation

The app has also faced criticism for lacking safety features that prevent harassment in the wake of a heated disagreement between New York Times reporter Taylor Lorenz and a VC named Balaji Srinivasan back in October 2020.

The incident revealed that the minds behind Clubhouse didn’t put much thought into how they would moderate discussions and ensure that the platform is an inclusive place where all users feel safe.

In the aftermath of this incident, Clubhouse updated its community guidelines and published a blog post in which it discusses questions around moderation and safety tools and the challenges of implementing them.

While the app has some safety features, such as the ability to make rooms private, block users, and report incidents, it’s unclear whether its moderation tools are up to the challenge presented by its rapidly growing user base. The company has said in a blog post that it’s working on it.

Is beta too late to think about community moderation?

Clubhouse is not alone. It’s hardly the first social platform to fail to get moderation and user privacy right the first time.

Remember last year’s Zoombombing fiasco in which uninvited users accessed Zoom calls and bombarded participants with explicit pornography? And the debates about Twitter and other social media sites deplatforming Trump (or waiting so long to do so)? And the thousands of posts containing words like “oxygen” and “frequency” being erroneously flagged as requiring a Covid-19 fact check?

Every platform with a community is bound to have its own moderation and safety growing pains, so it’s probably a good idea to be prepared with community guidelines and moderation protocols before launching.

To be fair, Clubhouse is technically still in beta testing and only has about a dozen employees, so it’s to be expected that it still has some kinks to iron out.

But former Quora moderation and community leader Tatiana Estévez told Protocol that it may already be too late to try to go back and steer Clubhouse’s culture in the right direction:

“Culture will develop whether you want it to or not. Tone will develop whether you want it to or not. I think moderation and community should be part of the product. It should be seen as an integral part of the product from the onset.”

Once an online community has developed its own tone and culture, it may not respond well to attempts to police it after the fact.

The challenge of user-generated content moderation

It’s becoming increasingly clear that products that offer authentic human connection and community through features like forums, publishing functions, in-app chat, voice calling, and video calling are in high demand.

However, any platform that permits user-generated content (UGC) needs content moderation tools to filter and screen inappropriate or illegal content from bad actors and keep other users safe.

The problem is that moderation is famously difficult to achieve at scale.

In 2018, Vice called moderation at Facebook “The Impossible Job,” diving deep into the company’s struggles with striking the right balance between filtering and censorship, as well as the many challenges involved in moderating communities at scale.

These are some of the biggest challenges involved in community moderation:

- There’s simply too much content for human moderators to manage it all.

- Manual moderation takes too long to be effective when it comes to near-real-time and live streaming moderation.

- Community guidelines grow and change constantly because it’s impossible to anticipate and prepare for every possible scenario.

- It’s almost impossible to keep large teams up to date on all the latest policies.

- The very act of using people to analyze other people’s content presents privacy issues.

- Content moderation can have an incredibly detrimental impact on the mental health of the individuals who do this work.

- Using AI to moderate UGC is a work in progress, and it requires a lot of (human) input to teach algorithms to accurately recognize inappropriate conduct.

These challenges are not limited to big social media platforms either. Anyone with a website or app that facilitates online community building needs to think about – and implement – robust moderation tools to protect the integrity of their brand.

Few companies are equipped to do this themselves, and even the ones that have the resources typically outsource it because of the many challenges involved.

That’s why it’s wise to choose a solution that comes with robust privacy, safety, and moderation features right out of the box when building community features into your app.

Using pre-built moderation solutions for your online community

Creating an online community is easy. Pretty much anyone can do it. The hard part is driving up user engagement to a point where the social graph is integral to your product. The hardest part is implementing moderation to ensure that your community engages productively and safely.

If you’re building a product in which social interaction is an integral component – whether it’s an eCommerce marketplace, dating app, on-demand service, gaming platform, or any other platform with an online user community – you’d be wise to choose solutions that offer robust moderation features right out of the box.

For instance, when it comes to in-app chat, voice, and video calling APIs and SDKs, Sendbird offers moderation features including advanced profanity filters as well as image moderation, spam protection, and tools that make it easy for moderators to freeze channels, mute or ban users – not to mention advanced encryption and security protocols to protect user data from bad actors.
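
To make features like profanity filtering, muting, banning, and channel freezing more concrete, here is a minimal Python sketch of how they might fit together in a chat backend. It is purely illustrative and is not Sendbird's API: the `Channel` class, the `mask_profanity` and `post_message` functions, and the tiny `BLOCKED_WORDS` list are all hypothetical names made up for this example. A production filter would also need a maintained word list and handling for misspellings and other languages.

```python
import re
from dataclasses import dataclass, field

# Hypothetical placeholder list; a real filter would use a maintained one.
BLOCKED_WORDS = {"darn", "heck"}


@dataclass
class Channel:
    """Illustrative chat channel holding basic moderation state."""
    frozen: bool = False                         # frozen: nobody can post
    muted: set = field(default_factory=set)      # user IDs that are muted
    banned: set = field(default_factory=set)     # user IDs that are banned
    messages: list = field(default_factory=list)  # (user, text) pairs


def mask_profanity(text: str) -> str:
    """Replace each blocked word with asterisks of the same length."""
    def mask(match: re.Match) -> str:
        word = match.group(0)
        return "*" * len(word) if word.lower() in BLOCKED_WORDS else word

    return re.sub(r"[A-Za-z']+", mask, text)


def post_message(channel: Channel, user: str, text: str) -> bool:
    """Store a filtered message, or reject it if moderation rules block it."""
    if channel.frozen or user in channel.muted or user in channel.banned:
        return False
    channel.messages.append((user, mask_profanity(text)))
    return True
```

In this sketch, a moderator would mute a user by adding their ID to `channel.muted` or freeze the whole channel by setting `channel.frozen = True`; either action causes `post_message` to return `False` for the affected users. Filtering happens before the message is stored, so other participants never see the unmasked text.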