Introducing Advanced Moderation: A complete moderation chat solution for AI, automation, and moderators


✨ Key takeaways:

Advanced chat moderation: Sendbird introduces a comprehensive trust and safety chat toolkit for online chat that merges AI, automation, and moderators' insight.

Auto-moderation for chat: Sendbird unveils a robust moderation rule engine to automate User-Generated Content (UGC) moderation.

AI moderation for chat: Sendbird integrates Hive, the leader in AI moderation, to detect unsafe UGC.

Live human moderation: Sendbird equips chat moderators with a review queue, moderation logs, and a live moderation dashboard to enhance moderation compliance and performance.

Trust and safety in online environments are critical in today's digital landscape. As the leading communications API platform for web and mobile applications, we’ve cultivated deep expertise in user engagement and community management. Our robust real-time chat API and infrastructure power global brands and bolster vibrant social communities like Hinge. Today, we’re thrilled to announce our latest breakthrough: Sendbird's Advanced Moderation for chat user-generated content.

This innovative suite of chat moderation tools merges the best of community managers' insights with the precision and efficiency of moderation automation. It creates a robust, hybrid UGC moderation toolkit that helps online communities manage user content. Customers across industries such as marketplaces, media and entertainment, dating, social communities, healthcare, and gaming already use Sendbird's Advanced Moderation to enhance community safety.

“At Kakao Entertainment, ensuring a safe and vibrant community is our top priority. Sendbird’s moderation rule engine has been a game-changer for us, providing the flexibility and efficiency to tailor moderation rules to our unique needs. We’re excited to create an even safer and more engaging space where our users can confidently connect and communicate.”

Pina, Project Manager at Kakao Entertainment

Read on to discover how Sendbird’s Advanced Moderation transforms community safety. Let’s start by exploring the five pillars of our advanced chat moderation system that stand at the forefront of this revolution in online community management.

The five pillars of chat moderation

Content moderation follows a linear process: from detecting concerning behavior, to evaluating the threat it poses, to deciding on and taking the measured action that enforces the community guidelines and keeps the community safe. Accordingly, Sendbird's Advanced Moderation for chat is built upon five essential pillars:

Detection: Using pattern matching or artificial intelligence, Advanced Moderation can intelligently detect problematic chat content, from profanity to spam.

Evaluation: Advanced Moderation combines automated and human-based review to guide action decisions.

Decision: Advanced Moderation leverages pre-defined rules to process chat user content automatically, enforcing trust and safety policies to uphold community guidelines consistently.

Execution: Advanced Moderation takes real-time actions, such as muting or banning chat users, to ensure healthy real-time UGC conversations.

Logging: Every action is archived meticulously to offer transparency on every moderated interaction. Records enhance chat content moderators' accountability and allow content moderation practices to be improved over time.
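To make the five pillars more concrete, here is a minimal sketch of how they could chain together. It is plain Python written for illustration, not Sendbird's API: the function names, the `BANNED_WORDS` list, and the assumption that a single match is ambiguous while several are clear-cut are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical word list, for illustration only.
BANNED_WORDS = {"spamword", "badword"}


@dataclass
class ModerationResult:
    message: str
    matches: List[str]
    needs_human_review: bool
    action: str
    log: List[str] = field(default_factory=list)


def detect(message: str) -> List[str]:
    """Detection: simple pattern matching against a word list."""
    return [w for w in message.lower().split() if w in BANNED_WORDS]


def evaluate(matches: List[str]) -> bool:
    """Evaluation: should a human look at this?
    Assumption: exactly one match is treated as ambiguous."""
    return len(matches) == 1


def decide(matches: List[str], needs_human_review: bool) -> str:
    """Decision: map the evaluation onto a pre-defined action."""
    if not matches:
        return "allow"
    if needs_human_review:
        return "flag_for_review"
    return "delete_and_mute"


def moderate(message: str) -> ModerationResult:
    matches = detect(message)
    needs_review = evaluate(matches)
    action = decide(matches, needs_review)
    # Execution would call the chat platform here; this sketch only records it.
    # Logging: keep a record of what was decided and why.
    log = [f"action={action} matches={matches}"]
    return ModerationResult(message, matches, needs_review, action, log)
```

Calling `moderate("hello there")` yields the action `"allow"`, while a message containing two listed words is deleted and its sender muted. In a real deployment each stage would be far richer, but the order of the stages stays the same.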

Let's delve into a central component of Sendbird’s Advanced Moderation that automates the first four stages of this process: The Moderation Rule Engine.


The features of advanced chat moderation: auto-moderation, review process, and compliance feature breakdown

Sendbird Advanced Moderation has three features: a content moderation rule engine, a moderation review queue, and content moderation logs. Let’s explore each in detail!

1. Content moderation rule engine: Codifying trust and safety

What is a content moderation rule engine?

A moderation rule engine is a software component designed to automate the process of content moderation in online platforms. It operates based on a set of predefined rules and criteria that dictate what kind of chat content is acceptable or unacceptable within a digital community. These rules can be tailored to the specific needs and guidelines of the platform.

The rule engine is the heart of our advanced content moderation solution for chat. It enables the codification and automation of community guidelines.

How does a content moderation rule engine work?

The moderation rule engine scans chat user-generated content (UGC) such as text, images, videos, and comments. It automatically takes pre-determined moderation actions when it detects content that matches the criteria set in its rules (for example, profanity, hate speech, spam, slurs, or other forms of inappropriate content). These actions can include deleting chat messages, flagging them for review by human moderators, muting or banning the user who posted them, or sending alerts to the community manager.

What are the primary benefits of a content moderation rule engine?

The primary benefits of a content moderation rule engine include:

Efficiency and scalability: It can process large volumes of chat content much faster than human moderators, making it an efficient safety tool for web and mobile apps with high volumes of UGC.

Consistency: Uniformly applying the same rules across all chat content ensures consistent enforcement of community guidelines.

Customization: Platforms can tailor the rules to fit their community standards and cultural sensitivities.

Reduced workload for chat moderators: It handles routine content moderation tasks, allowing chat moderators to focus on more complex or nuanced cases.

Real-time moderation: It can moderate chat content according to community standards in real time, which is crucial to preserving the user experience.

What are chat moderation rules?

Chat moderation rules are the guidelines or criteria an online community sets to maintain a safe, respectful, and constructive environment. These rules dictate acceptable behavior and user content within the community. They are designed to:

Prevent abuse: To stop harassment, bullying, hate speech, and other forms of abuse.

Maintain quality: To ensure content is relevant, constructive, and valuable to the community.

Enforce legal compliance: To comply with privacy and data protection laws like CCPA or GDPR and regulations regarding content and behavior online.

Protect privacy: To safeguard personally identifiable information (PII) and prevent sharing sensitive data without consent.

Promote a positive environment: To foster a welcoming and inclusive space for all users.

Sendbird’s moderation rules follow a simple logical format:

When <an event is triggered>,

If <conditions are met>,

Then <actions are executed>

This simple logic allows the moderation rule engine to decide automatically and precisely when and how to take action, based on rules that moderators or the content moderation council have defined in advance. Here is an implementation example:

When a message is reported,

If five unique users have reported a message in the last 5 hours,

Then delete the message and mute the sender for 5 minutes
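The example rule above can be sketched in a few lines of Python. This is an illustration of the When/If/Then logic only, not Sendbird's rule engine or API: the `Report` type, the `on_message_reported` function, and the action names are hypothetical, and the sketch assumes the rule fires once five or more unique users have reported the message.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Tuple


@dataclass(frozen=True)
class Report:
    message_id: str
    reporter_id: str
    reported_at: datetime


REPORT_THRESHOLD = 5                  # unique reporters needed
REPORT_WINDOW = timedelta(hours=5)    # look-back window
MUTE_DURATION = timedelta(minutes=5)  # how long the sender is muted


def on_message_reported(
    message_id: str, reports: List[Report], now: datetime
) -> List[Tuple[str, object]]:
    """WHEN a message is reported, evaluate the rule and return actions."""
    window_start = now - REPORT_WINDOW
    # IF: count unique reporters of this message inside the window.
    recent_reporters = {
        r.reporter_id
        for r in reports
        if r.message_id == message_id and window_start <= r.reported_at <= now
    }
    # THEN: delete the message and mute the sender.
    if len(recent_reporters) >= REPORT_THRESHOLD:
        return [("delete_message", message_id), ("mute_sender", MUTE_DURATION)]
    return []
```

Using a set of reporter IDs means repeated reports from the same user count once, which matches the "unique users" wording of the rule.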

Here is a quick summarizing table for triggers, conditional evaluation, and actions that the chat moderation rule engine encompasses:

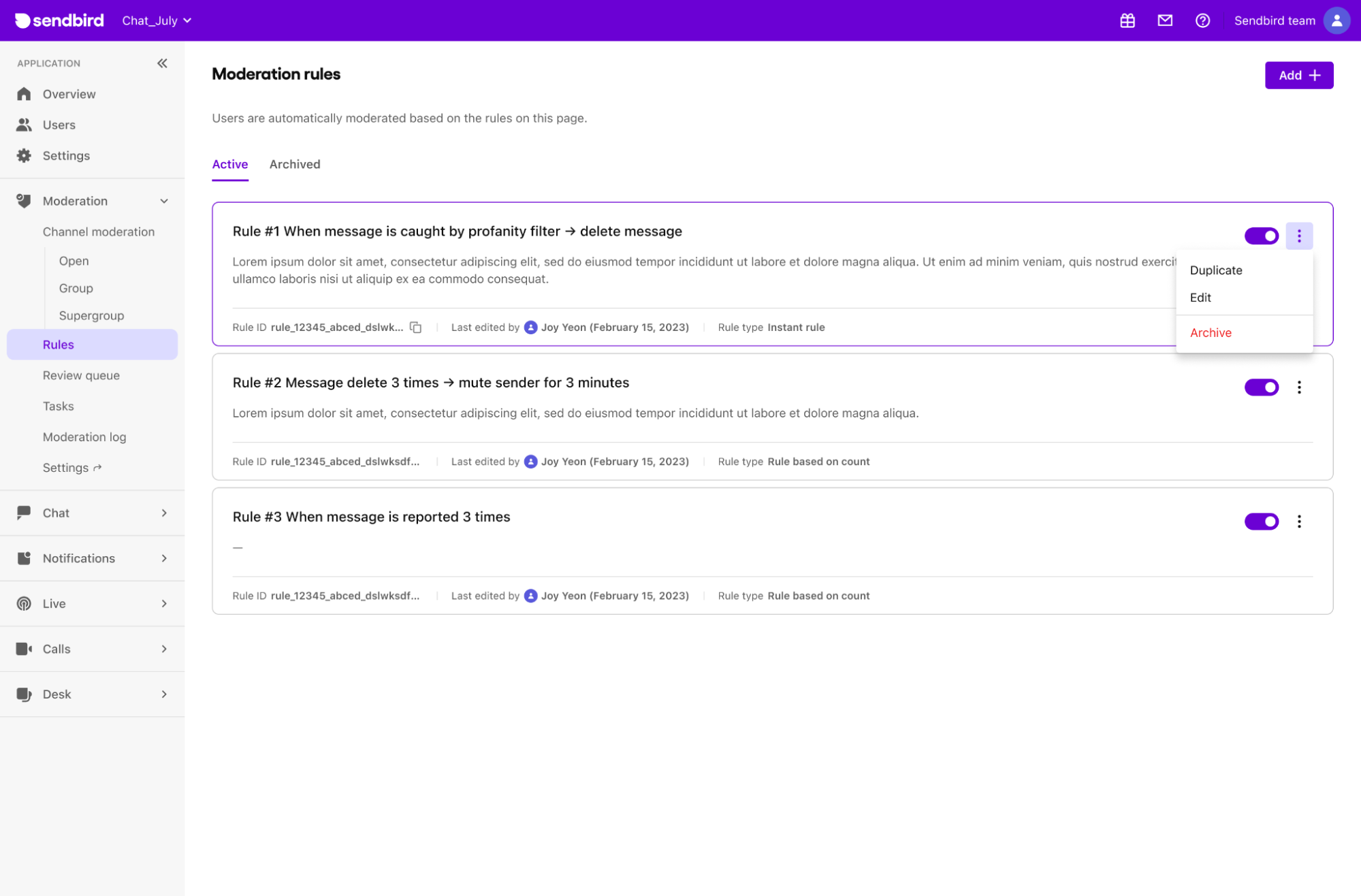

What is moderation rule management?

Moderation rule management is an essential component of community governance, instrumental in cultivating constructive online environments. It achieves this by establishing explicit trust and safety guidelines and diligently ensuring community members' adherence.

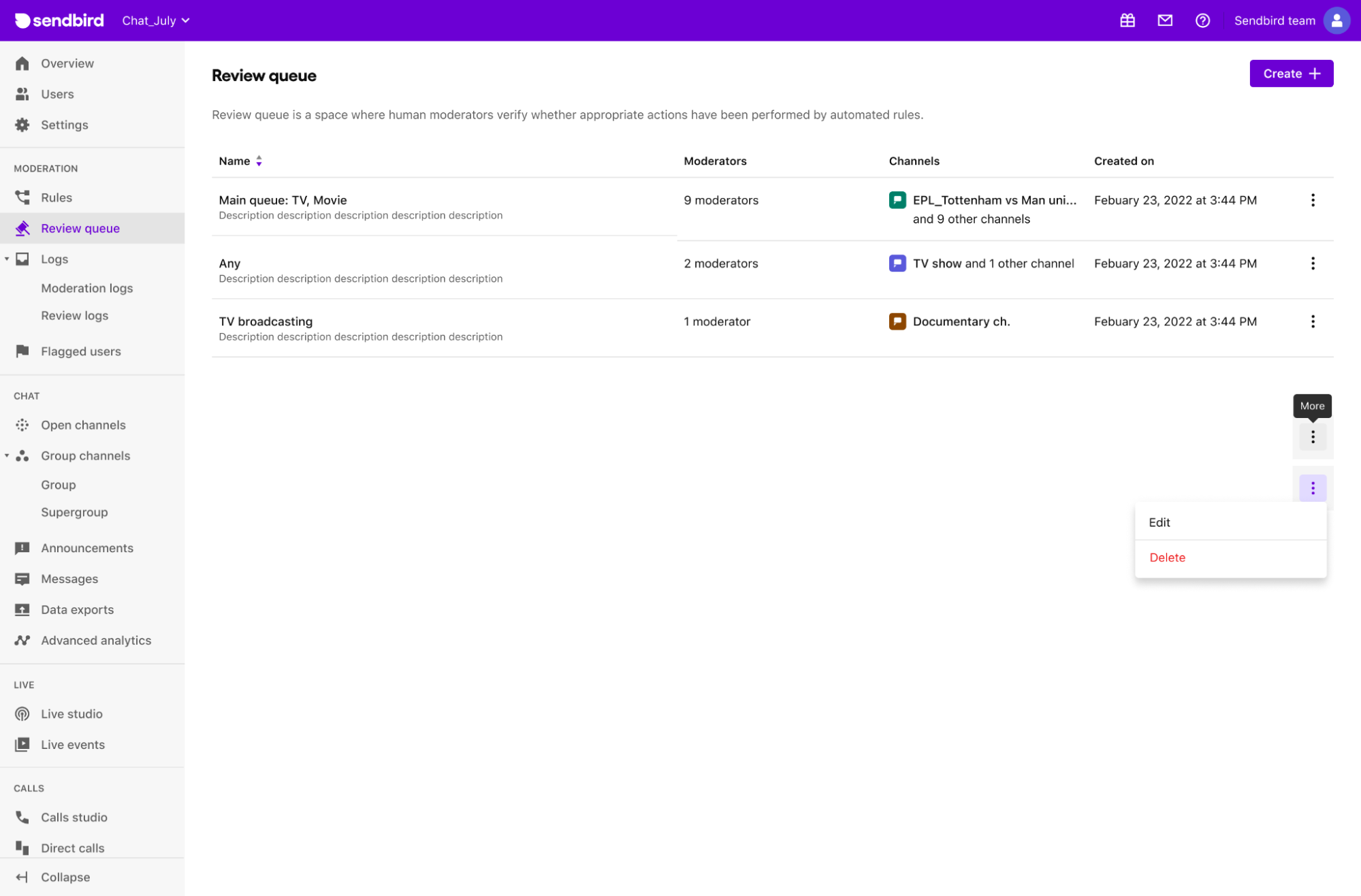

Chat moderation with Sendbird is streamlined through its dashboard, empowering content moderators to effortlessly create, activate, deactivate, modify, remove, or archive moderation rules. This flexibility allows for the dynamic refinement and enhancement of the content moderation system.
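The rule lifecycle described here (create, activate, deactivate, modify, remove, archive) can be pictured as a small registry. The sketch below is a hypothetical in-memory model for illustration; `Rule`, `RuleRegistry`, and the three status values are assumptions and do not reflect how the Sendbird dashboard stores rules.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Rule:
    name: str
    description: str
    status: str = "inactive"  # one of "inactive", "active", "archived"


class RuleRegistry:
    """In-memory store of moderation rules (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._rules: Dict[str, Rule] = {}

    def create(self, name: str, description: str) -> Rule:
        if name in self._rules:
            raise ValueError(f"rule {name!r} already exists")
        self._rules[name] = Rule(name, description)
        return self._rules[name]

    def activate(self, name: str) -> None:
        self._rules[name].status = "active"

    def deactivate(self, name: str) -> None:
        self._rules[name].status = "inactive"

    def modify(self, name: str, description: str) -> None:
        self._rules[name].description = description

    def archive(self, name: str) -> None:
        # Archived rules are kept for reference but no longer enforced.
        self._rules[name].status = "archived"

    def remove(self, name: str) -> None:
        del self._rules[name]

    def active_rules(self) -> List[str]:
        """Names of the rules that are currently enforced, sorted."""
        return sorted(r.name for r in self._rules.values() if r.status == "active")
```

New rules start inactive in this sketch, so a moderator can review a rule before it begins to act on live chat.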

2. Moderation review queue: A content moderation review desk

What is a content moderation review queue?

A moderation review queue is a specialized system within online communities where community managers review, in detail, user-generated content (UGC) that automated chat moderation tools have flagged. This system is a critical checkpoint to ensure all content aligns with the platform's community standards.

What is the purpose of a content moderation review queue?

The moderation review queue is an essential layer in the chat moderation process. When the automated rule engine flags chat messages as potentially violating community guidelines, this content is channeled into the review queue. Here, chat moderators, or in some cases more advanced automated systems such as those powered by AI, undertake a thorough review. This process involves assessing the context and nuances of the messages to determine their appropriateness.

Key advantages of the content moderation review queue

Prioritization: The queue allows for categorizing and prioritizing moderated content, enabling moderators to address the most critical issues first.

Complexity management: The moderation review queue lists complex user content that is too challenging for an automated or AI moderation system so that chat content moderators can evaluate it and ensure fair and appropriate processing.
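Prioritization in a review queue can be sketched with a priority heap. The example below is a generic illustration, not Sendbird's implementation; `FlaggedMessage`, `ReviewQueue`, and the numeric `severity` scale (higher means more urgent) are hypothetical.

```python
import heapq
import itertools
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class FlaggedMessage:
    message_id: str
    reason: str
    severity: int  # hypothetical scale: higher means more urgent


class ReviewQueue:
    """Serves the most severe item first; ties are served oldest first."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, FlaggedMessage]] = []
        self._counter = itertools.count()  # insertion order, used as tie-breaker

    def add(self, item: FlaggedMessage) -> None:
        # heapq is a min-heap, so severity is negated to pop the highest first.
        heapq.heappush(self._heap, (-item.severity, next(self._counter), item))

    def next_for_review(self) -> Optional[FlaggedMessage]:
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

With this ordering, a message flagged for a threat is reviewed before one flagged for spam, even if the spam report arrived earlier.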

3. Content moderation logs: Centralized and searchable

What are chat moderation logs?

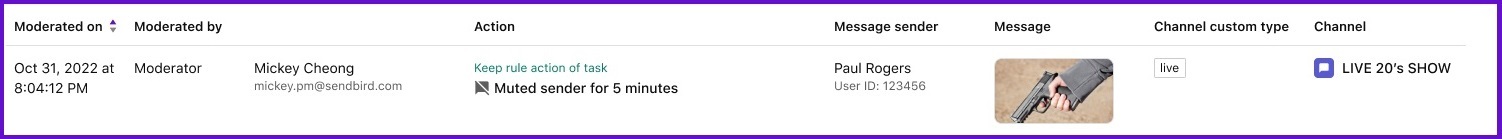

Chat moderation logs represent a comprehensive database of all moderated content within a chat platform. These logs serve as a detailed record, chronicling various aspects of moderated content and actions taken by chat content moderators.

What is the functionality and importance of chat moderation logs?

The primary function of chat moderation logs is to offer a transparent and accessible view of content moderation activities. They capture and store critical data such as the nature of the content, the time and date of content moderation actions, details about the content manager involved, the specific actions taken (like deletion, muting, or banning), and the context within which these actions occurred, including the relevant chat channel and user information.

Key features and benefits of content moderation logs:

Centralized data repository: Moderation logs are a centralized source for all moderation data, making it easier to track and manage content moderation activities across the platform.

Search and filter capabilities: With advanced search and filter options, chat moderators and community managers can quickly locate specific incidents or patterns, facilitating efficient oversight.

Enhanced accountability and transparency: These logs provide a transparent and accountable record of all content moderation actions, ensuring that moderators' decisions can be reviewed and audited.

Compliance and regulatory adherence: By maintaining a detailed record of content moderation actions, these logs help ensure that the chat platform adheres to legal and regulatory requirements.

Insightful data for continuous improvement: Analysis of the log data can reveal trends and insights, aiding in continuously refining content moderation strategies and rules.

Training and dispute resolution: Chat moderation logs are invaluable for training new moderators and resolving disputes, offering concrete examples of past content moderation challenges and how they were handled.
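As a rough picture of what a searchable moderation log might hold, the sketch below models one log entry with the kinds of data described above (time, moderator, action, channel, user, content) and a simple filter over them. The field names and the `search_logs` helper are hypothetical and are not the schema or query interface of Sendbird's moderation logs.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class ModerationLogEntry:
    timestamp: datetime
    moderator_id: str  # who (or which rule) took the action
    action: str        # e.g. "delete", "mute", "ban"
    channel_id: str
    user_id: str       # the user the action was applied to
    content: str       # the moderated message text


def search_logs(
    entries: Iterable[ModerationLogEntry],
    *,
    action: Optional[str] = None,
    user_id: Optional[str] = None,
    channel_id: Optional[str] = None,
    since: Optional[datetime] = None,
) -> List[ModerationLogEntry]:
    """Return entries matching every filter that was provided."""
    results = []
    for entry in entries:
        if action is not None and entry.action != action:
            continue
        if user_id is not None and entry.user_id != user_id:
            continue
        if channel_id is not None and entry.channel_id != channel_id:
            continue
        if since is not None and entry.timestamp < since:
            continue
        results.append(entry)
    return results
```

For example, `search_logs(entries, action="mute", user_id="u1")` would list every time a given user was muted, which is the kind of lookup that supports audits and dispute resolution.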

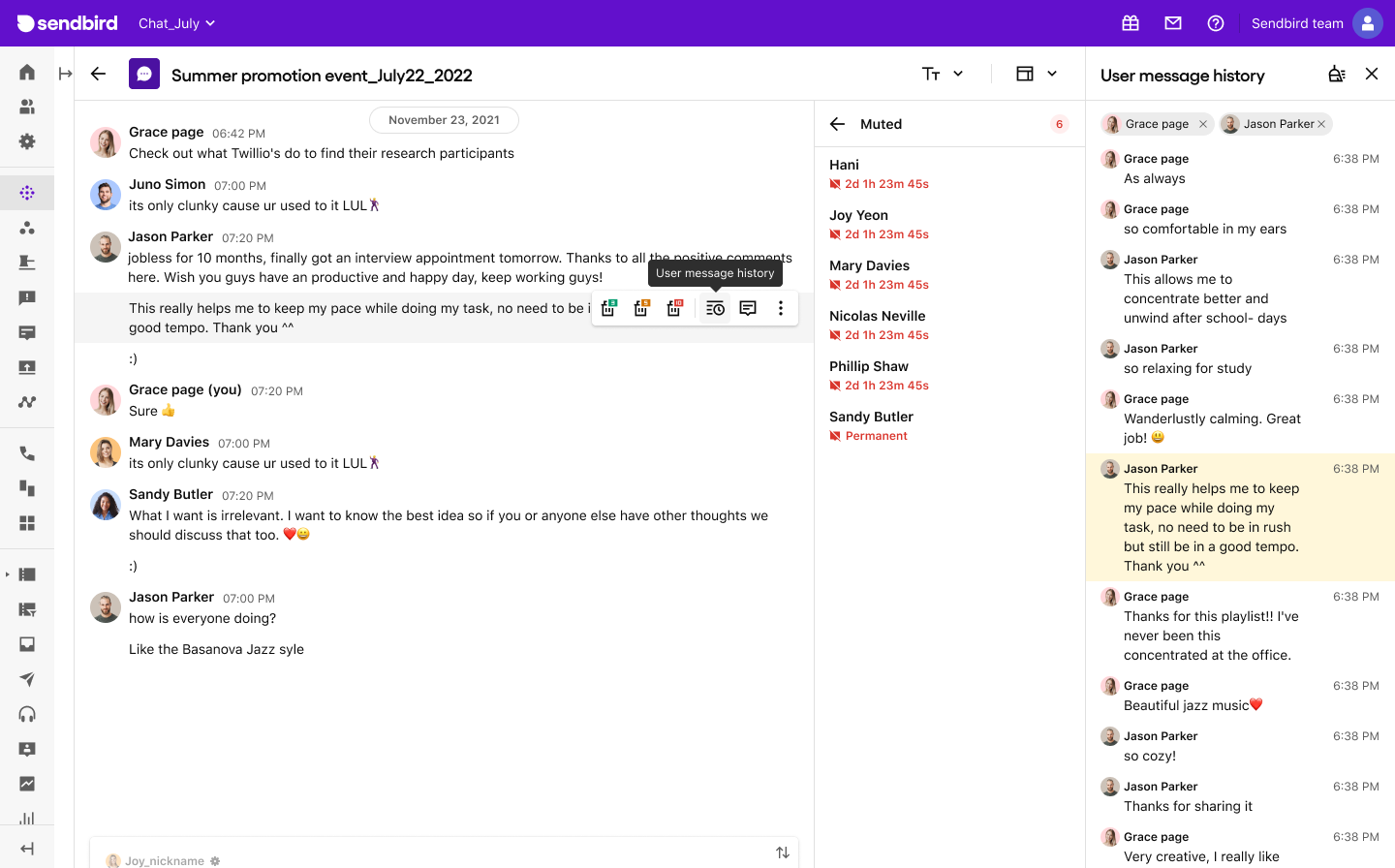

Hybrid chat moderation: Complementing automated chat moderation with live moderator interventions

As powerful as chat auto-moderation can be, there are instances where the nuance and complexity of certain cases necessitate human judgment. In these situations, the rule engine hands the cases to human moderators. This forms the core of hybrid chat moderation.

What is hybrid chat moderation?

Hybrid chat moderation refers to a method of user content moderation that combines both automated tools and human oversight. This approach manages user-generated content on web and digital platforms, such as social media, forums, and chat applications.

Sendbird's Advanced Moderation includes a live chat moderation dashboard to empower content moderators to step in seamlessly when the automated moderation can't address ambiguous or unforeseen situations. This hybrid approach ensures a more adaptable, thorough, and effective UGC moderation approach that boosts community trust and safety in online interactions.

AI moderation: enhancing moderator support with Hive AI moderation

What is AI moderation?

AI moderation, or artificial intelligence moderation, refers to using machine learning algorithms and artificial intelligence technologies to analyze, filter, and manage user-generated content on digital platforms such as social media, online forums, chat applications, and websites. AI moderation tools are designed to detect and flag various types of content, including slurs, spam, sensitive content (violence, nudity, etc.), misinformation, and compliance infringements.

What are the benefits of AI moderation?

AI moderation benefits include scalability, efficiency, consistency, and ongoing improvements.

- Scalability: AI can handle large volumes of content in real time, making it scalable for platforms with millions of users and extensive user-generated content.

- Efficiency: Automated moderation processes can quickly flag and address problematic content, reducing the burden on human moderators.

- Consistency: AI algorithms apply consistent moderation standards across all content, helping maintain a fair and uniform approach to content management.

- Improvement: AI systems can learn from data and user feedback, improving accuracy and effectiveness.

What are AI moderation limitations?

AI moderation also has limitations and challenges. While AI algorithms are becoming more sophisticated, they may still struggle with nuanced language, context-specific content, and cultural variations. Human oversight and intervention are often necessary to handle complex cases, ensure fairness, and address false positives or negatives generated by AI algorithms.

Integrating Hive moderation with Sendbird

The Sendbird Hive integration is native. All you need to do is select it in the Sendbird dashboard. Hive AI will automate the detection of complex behaviors and content that may not be explicitly profane but still violates your community guidelines. This integration allows for more nuanced moderation, maintaining a clean and safe environment for user interactions. You can decide on different types of actions, such as putting the messages on hold, replacing and sending them, or allowing them to go through while the review process is happening.
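The three kinds of action mentioned above can be sketched as a small decision function. This is an illustration only: it does not call Hive or Sendbird, the classifier is passed in as a stand-in callable, and `FlagMode`, `handle_message`, and the placeholder text are hypothetical. In particular, it assumes that "replacing and sending" means delivering a placeholder in place of the original text.

```python
from enum import Enum
from typing import Callable, Optional, Tuple


class FlagMode(Enum):
    HOLD = "hold"        # withhold the message until review finishes
    REPLACE = "replace"  # deliver a placeholder instead of the original
    ALLOW = "allow"      # deliver the original while review happens


PLACEHOLDER = "[message under review]"  # hypothetical replacement text


def handle_message(
    text: str, is_unsafe: Callable[[str], bool], mode: FlagMode
) -> Tuple[Optional[str], bool]:
    """Return (text to deliver now, whether the message was queued for review).

    `is_unsafe` stands in for an AI classifier; None means nothing is delivered yet.
    """
    if not is_unsafe(text):
        return text, False
    if mode is FlagMode.HOLD:
        return None, True
    if mode is FlagMode.REPLACE:
        return PLACEHOLDER, True
    return text, True
```

Which mode fits best depends on the community: holding messages is the most cautious choice, while allowing them through keeps conversations flowing at the cost of briefly exposing flagged content.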

Enhance your community's safety with Sendbird Advanced Moderation for chat.

Sendbird's Advanced Moderation for chat user-generated content marks a significant leap forward. It is a complete kit that combines AI moderation, auto-moderation, and all the tools moderators need, built into intuitive software. This hybrid approach merges the precision and speed of AI and auto-moderation with the nuanced understanding of community moderators, setting a new benchmark in community safety.

Join leading brands in building a safer digital world with Sendbird's Advanced Moderation for online communities. Contact us to learn more.

Happy chat moderation! 💬✨